Nvidia DreamZero0: Why World Models May Become Policies

DreamZero0 is not just a stronger robotics benchmark. It is a serious argument for a new robotics stack.

Key points

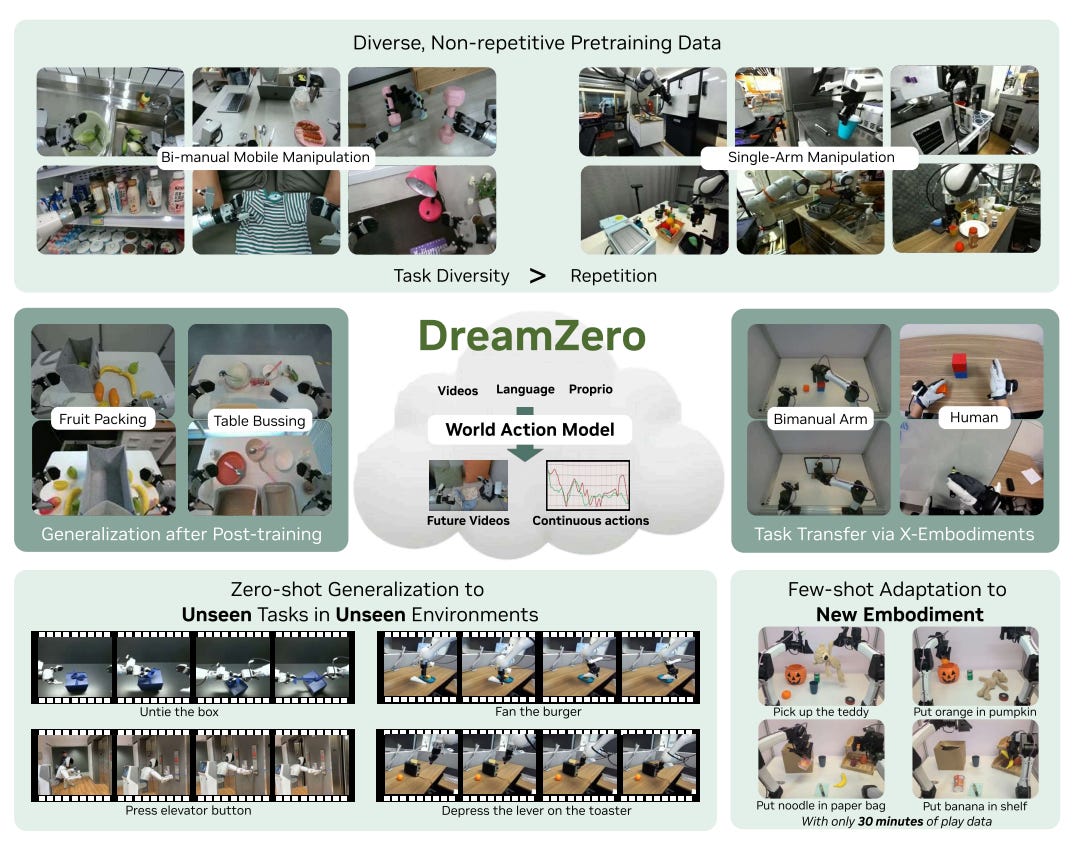

1. DreamZero changes the learning objective. It predicts future video and actions together. That is the core idea behind its World Action Model (WAM).

2. The bigger contribution is the data thesis. Broad, messy, non-repetitive robot data may be better for generalization than repeated demos of a narrow task set.

3. The transfer result matters. DreamZero improves from short video-only demonstrations from humans or another robot. Action labels may no longer be the only scarce resource.

4. This has direct startup implications. The next robotics moat may come less from narrow task tuning and more from world-model quality, diverse operational data, sensor standardization, and inference systems.

5. The paper is impressive, but not magic. The model is still short-horizon. It is expensive to serve. High-precision manipulation is still open.

Why this paper matters

I wanted to start this Substack with DreamZero because it makes a claim that feels bigger than a benchmark win.

Many recent robotics foundation models still follow the same recipe: images and language in, actions out. Those models can improve semantic understanding and object coverage. But they often still fail when the required motion is new. They fall back to the dominant action prior in the dataset: grasp, move, place.

DreamZero argues for a different path.

Its core claim is simple: a robot policy may generalize better if it is trained to model how the world should change, not only which action to emit next.

That shift matters because robotics is not only a semantics problem. It is also a dynamics problem. A robot must understand not only what an object is, but how the scene evolves under contact, motion, error, and recovery.

From VLA to WAM

The paper introduces DreamZero as a World Action Model rather than a standard Vision-Language-Action model.

A standard VLA is trained in a direct way:

observation + language -> action

DreamZero changes this to something closer to:

observation + language -> future world + action

The model imagines the next visual states of the world while also producing the actions that would make those states happen.

This matters for one reason: future video is dense supervision.

Every future frame constrains geometry, object motion, contact, temporal consistency, and scene evolution. That is a much richer signal than action labels alone. The action head is not learning in isolation. It is learning inside a model that must maintain a plausible visual future.

This is the strongest idea in the paper. It makes the old phrase “world models can become policies” feel like a practical design choice.

Technically, the system is large. DreamZero uses a 14B robot foundation model built on a pretrained Wan video-diffusion backbone, with robot-specific state and action modules added on top. The key point is the design principle: start from a strong video model, keep its spatiotemporal prior, and align robot action with predicted world evolution.

The result that matters most: unseen motion generalization

The headline numbers are strong. But the value is in what they mean.

DreamZero performs well not only on seen tasks in new environments, but also on tasks that were absent from training. That is the hard case. Many models look competent when the object changes or the wording changes. Fewer models keep working when the motion pattern itself changes.

The paper reports that on AgiBot G1, DreamZero reaches 62.2% average task progress in zero-shot evaluation on seen tasks with unseen environments and objects. A strong pretrained VLA baseline reaches 27.4%. On 10 tasks not present in training, DreamZero reaches 39.5% average task progress, while VLA baselines drop sharply and often revert to generic pick-and-place behavior.

This is the right failure mode to focus on.

If a model hears a new instruction and responds with a familiar manipulation template, that is not robust generalization. It is action prior collapse. DreamZero appears to reduce that problem.

The deeper thesis is about data, not architecture

The most important part of the paper may be the data recipe.

DreamZero is pretrained on about 500 hours of teleoperation data across 22 real environments. The key point is not only the scale. It is the distribution.

The data is intentionally non-repetitive.

Instead of collecting many clean repetitions of the same task, the team collects long episodes with many coarse tasks and many transitions between them. Tasks are rotated out after enough collection, which pushes the dataset toward breadth and long-tail coverage.

This is an important claim.

A lot of robot data collection still assumes that better policies come from cleaner labels and more repeated demonstrations. DreamZero suggests that for world-model-style policies, repetition may be less valuable than diversity. The model benefits from seeing many kinds of scene changes, many kinds of interaction failures, and many kinds of partial task structure.

The paper includes a clean ablation. With the same amount of data, DreamZero trained on diverse data outperforms DreamZero trained on repetitive data, improving task progress from 33% to 50% on the same evaluation.

If this result holds more broadly, it changes how we should think about data moat.

The most valuable robotics dataset may not be the cleanest benchmark dataset. It may be the operational dataset with the widest support over real work: tidying, carrying, folding, wiping, sorting, recovering, and switching tasks inside the same episode.

What this means for startups and companies

DreamZero changes the answer to a basic question:

What should a robotics company scale?

Under the standard VLA view, the answer is often: collect more action-labeled demos across more tasks.

Under the DreamZero view, the answer shifts. A company should try to scale:

broad multi-view interaction data,

enough action data to ground control,

strong world-model pretraining,

and a deployment stack that can serve a large generative policy in closed loop.

That has three clear implications.

First, data strategy changes. A company in logistics, retail, hospitality, or home robotics may gain more by collecting diverse workflow fragments than by over-optimizing one benchmark skill.

Second, video becomes more valuable. If video-only demonstrations can improve performance, then human demonstration video and cross-fleet recordings become strategic assets.

Third, systems engineering becomes part of the moat. If the policy is also a large generative video model, then inference speed, GPU scheduling, quantization, caching, and asynchronous execution all become first-class product concerns.

This is why DreamZero matters for investors as well as researchers. It points to a different stack, and therefore a different kind of company.

The transfer story matters

The cross-embodiment section is the most strategic part of the paper.

DreamZero improves unseen-task performance using short video-only demonstrations from another robot and from humans. Those demonstrations do not include action labels. They only provide visual evidence of task dynamics.

If a world model already captures much of the task structure, then video can teach the model what successful interaction should look like even when the action interface changes. In the paper, just 20 minutes of video from another robot or 12 minutes of human video substantially improves performance on unseen tasks.

The paper also shows few-shot adaptation to a new bimanual robot with around 30 minutes of play data.

I would not overclaim here. This is not proof that transfer across radically different robots is solved. But it is strong evidence that the world prior may transfer faster than the action interface.

Two caveats that matter

The first caveat is inference cost.

DreamZero is a large video-diffusion policy. That is inherently expensive. The paper reaches about 7 Hz closed-loop control through a long stack of optimizations. That is impressive. It is also a reminder that WAMs are not just a modeling choice. They are an infrastructure choice.

The second caveat is task horizon and precision.

The paper is fairly explicit that DreamZero is still closer to a short-horizon “System 1” model than a long-horizon planner. It also does not solve fine, high-precision manipulation. Tasks like tight insertion or very delicate contact remain difficult.

So I do not read this paper as “VLAs are obsolete.” I read it as something more useful: the motion-generalization frontier has moved, and direct action regression is no longer the only serious path.

Final take

My main takeaway is direct.

DreamZero matters because it proposes a better scaling story for robotics.

The paper suggests that predicting the world may be a better route to robust action than predicting actions alone. It also suggests that broad and messy real-world data may be more valuable than narrow repeated demos. And it hints that video-only transfer may become a real lever for embodiment adaptation.

If those claims continue to hold, the implications are large.

The value of diverse precise robot data goes up.

The value of human video goes up.

The value of sensor standardization goes up.

The value of inference engineering goes up.

And the value of narrow demo farms may go down.

That is why I think DreamZero is one of the most important robotics papers in this cycle.

Not because it solves general robotics.

But because it changes what a scalable solution might look like.